Chapter 2.1: MLOps Blueprint & Operational Strategy

Understand the end-to-end MLOps lifecycle and operational principles for shipping ML systems in production

MLOps Blueprint & Operational Strategy

What MLOps actually is (in one line)

MLOps = the operating system for ML in production: processes + tooling to ship, observe, govern, and continuously improve systems where code + data + models all change independently.

1) The “3 moving parts” mental model

Traditional software: code changes. ML systems: code + data + model all evolve, and any one can break the system.

Core consequence: your “release unit” is rarely just a container—it’s a pipeline + artifacts + metadata.

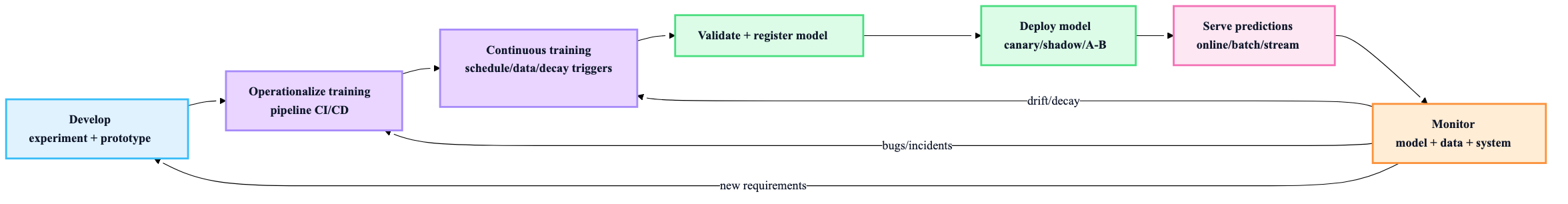

2) The end-to-end lifecycle you must plan for

If you don’t explicitly design these loops, they will emerge as outages.

Heuristic: if you can’t point to where drift is detected and how retraining is triggered and validated, you don’t have an MLOps plan yet.

3) Core goals (translate to engineering outcomes)

From “Speed, Reliability, Scalability, Collaboration, Governance” → the operational outcomes you actually design for:

- Speed: reduce time from idea → safe production experiment (lead time)

- Reliability: reproducible training + deterministic releases + rollback

- Scalability: support N models / teams without linear ops headcount

- Collaboration: clear ownership + low-friction handoffs + shared interfaces

- Governance: auditability, lineage, access controls, compliance evidence

4) Principles → concrete rules (what teams should do)

These are only useful if they become “defaults” in your system design.

Automation

- Everything repeatable becomes code: pipelines, infra, tests, deployments, backfills.

- Rule: if a runbook step is done twice, automate it.

Reproducibility

Version (code, data, features, params, env, model).

- Rule: every model in prod must be reconstructable from immutable inputs + metadata.

Continuous X (CI / CD / CT / CM)

- CI: validate code + data + training logic on every change

- CD: release pipeline & serving changes safely

- CT: retrain when triggers fire

- CM: detect degradation early and route to action

Comprehensive testing

Not just unit tests:

- data tests (schema, ranges, distribution)

- training tests (convergence sanity, leakage checks)

- model tests (metric floors, fairness checks where relevant)

- deployment tests (contract tests, canary/shadow validation)

Observability

You need “why”, not just “what”:

- metrics + logs + traces

- lineage (which data + code produced this model)

- prediction monitoring (drift, performance proxies)

5) Stack planning without tool-religion

Treat stack as capabilities, not products. A pragmatic capability map:

| Capability | You need it when… | “Start simple” default |

|---|---|---|

| Data versioning + lineage | regulated/high-risk or frequent retraining | dataset snapshots + metadata + immutable storage |

| Experiment tracking | multiple experiments/models/people | MLflow/W&B/managed equivalent |

| Feature management | training-serving skew risk is real | versioned feature code + offline/online parity plan |

| Orchestration | pipelines > scripts | managed workflows / Step Functions / Airflow / KFP |

| Model registry | >1 model or frequent releases | registry + stage transitions + approval gates |

| Serving platform | strict SLOs / scale | managed endpoints or Kubernetes (only if needed) |

| Monitoring (ML + system) | any production use | infra metrics + data drift + quality sampling |

Build vs Buy heuristic

- Buy what is undifferentiated and operationally heavy (registry/monitoring/managed orchestration).

- Build what is your differentiator (feature logic, domain labeling ops, evaluation harness, safety policies).

- Avoid building “mini-Kubeflow” accidentally.

6) MLOps maturity: pick the level you can actually sustain

Most teams fail by jumping to “Level 2” tooling without Level 1 discipline.

| Level | What it looks like | When it’s acceptable | Main risks |

|---|---|---|---|

| 0: Manual | scripts + manual deploy, little monitoring | prototypes, internal-only | irreproducible, silent decay |

| 1: Pipeline automation | orchestrated training, CT triggers, validation | 1–3 models, growing usage | pipeline changes still risky/manual |

| 2: CI/CD automation | tested pipelines + safe model releases + registry gates | multiple models/teams, real SLOs | platform complexity, process overhead |

Rule of thumb: move up a level only when the previous level’s pain is costing you real money/time weekly.

7) ADRs (Architecture Decision Records): the cheapest governance you’ll ever get

Use ADRs to stop “tribal knowledge MLOps.”

Minimum ADR template (keep it short):

- Context (what forced the decision)

- Decision (what you chose)

- Consequences (trade-offs + operational impact)

- Alternatives considered (1–2 lines)

Heuristic: if the decision affects security, cost, reliability, or long-term lock-in → ADR it.

8) Roles & collaboration: choose a model that matches maturity

Collaboration models (practical comparison)

| Model | Pros | Cons | Best fit |

|---|---|---|---|

| Separate DS vs Platform/Ops | specialization, clean ownership | handoffs, slow debugging | large orgs with platform strength |

| Full-stack DS | speed, tight iteration | unicorn dependency, brittle ops | small teams, early stage |

| Hybrid platform-enabled | leverage platform + keep DS/MLE velocity | requires platform product thinking | most companies at scale |

Default recommendation: hybrid platform-enabled as soon as you have >1 team or >2 production models.

9) The “Operational Strategy” checklist (what to decide up front)

These decisions prevent re-architecture later:

- Release unit: model-only vs pipeline+model

- Triggers for CT: schedule vs data arrival vs drift/decay thresholds

- Quality gates: metric floors, bias checks, cost/latency constraints

- Promotion policy: manual approval vs automatic + rollback

- Serving mode: online vs batch vs streaming (and why)

- Ownership: who is on-call? who can roll back?

- Monitoring scope: system + data + model + business KPI proxies

- Governance needs: audit logs, lineage, access control, retention